How Accurate Are AI Calorie Counters?

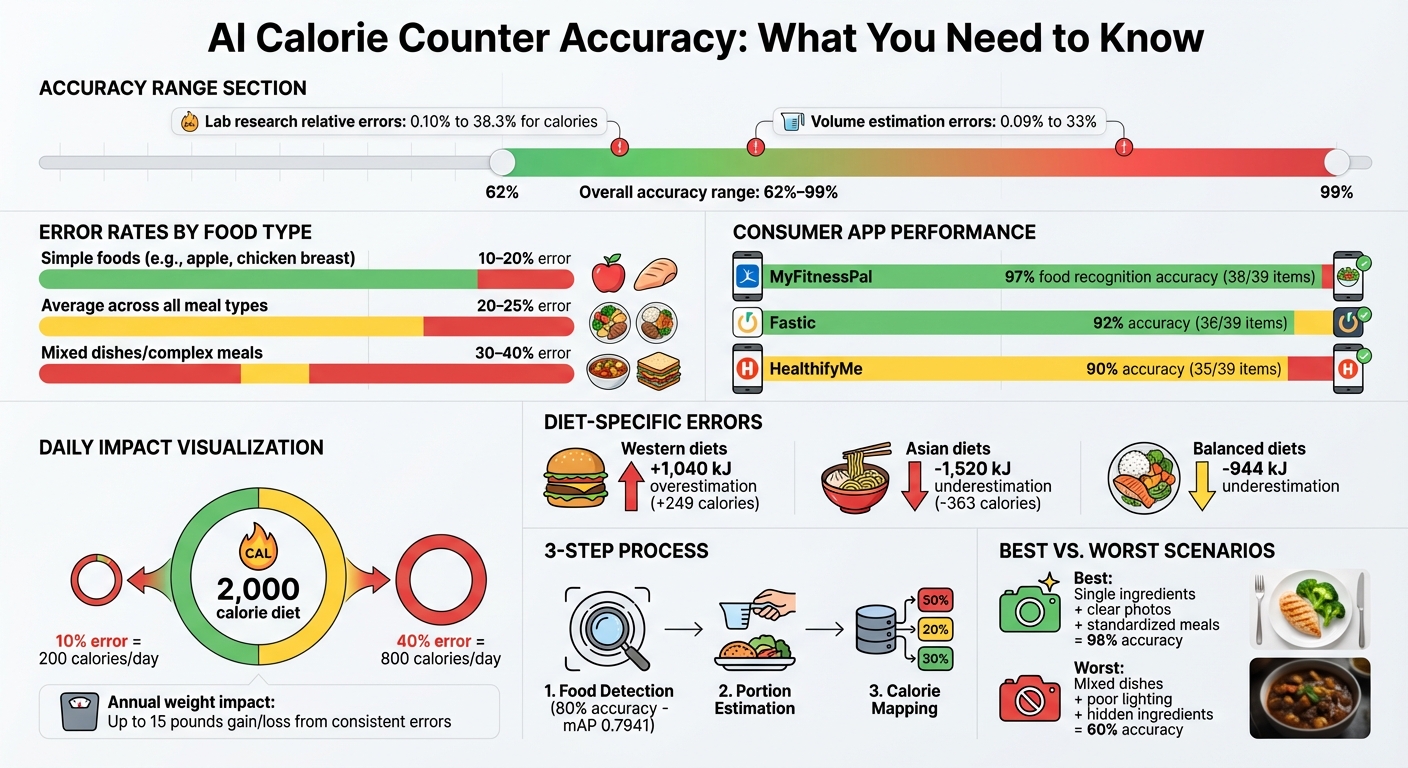

AI calorie counters simplify tracking your meals, but their accuracy depends on the food's complexity and the quality of your photo. For single-ingredient items like an apple, they can be quite precise, with error rates as low as 10%. For mixed dishes, however, errors can climb to 30–40%. Even with these challenges, they tend to outperform manual logging, which is often less reliable.

Key Takeaways:

- Accuracy Range: 62%–99% depending on food type and conditions.

- Best Use: Simple foods or standardized meals (e.g., restaurant items).

- Challenges: Mixed dishes, hidden ingredients, and poor photo quality.

- Impact: Errors of 200–600 calories/day could affect weight goals over time.

While these tools save time, they’re not perfect. Use them as a guide, especially for long-term averages, and double-check estimates for complex meals.

AI Calorie Counter Accuracy Rates and Error Ranges by Food Type

How AI Calorie Counters Work

When it comes to turning meal photos into nutritional data, AI calorie counters rely on a three-step process: food detection, portion estimation, and calorie mapping. Each step plays a crucial role, and errors along the way can affect the final results.

Food Detection and Recognition

The first task is identifying the foods in the image. AI systems use CNN-based object detectors such as YOLO or Faster R-CNN to separate the food from the background and any utensils. These systems are trained on massive datasets where food items are labeled with bounding boxes or masks. For example, a system developed by NYU Tandon achieved a mean average precision (mAP) of 0.7941 at an intersection over union (IoU) threshold of 0.5. Loosely, that means its detections were right about 80% of the time, though mAP averages precision across recall levels and food classes rather than counting simple hits.
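To make the IoU threshold concrete, here is a minimal sketch of how the overlap between a predicted box and a labeled box is typically scored. The box coordinates and the `is_match` helper are made up for illustration; they are not taken from the NYU Tandon system.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Width and height of the overlapping rectangle (zero if the boxes are disjoint).
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def is_match(predicted, truth, threshold=0.5):
    """A detection counts as correct when its IoU meets the threshold."""
    return iou(predicted, truth) >= threshold


# Hypothetical boxes in pixel coordinates.
predicted = (10, 10, 110, 110)
truth = (30, 30, 130, 130)
print(round(iou(predicted, truth), 3))  # 0.471
print(is_match(predicted, truth))       # False: just under the 0.5 threshold
```

In this example the two boxes share most of their area, yet the detection would still be counted as a miss at an IoU of 0.5, which is one reason mAP is not a plain accuracy figure.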

Getting the food labels right is essential. Mislabeling can lead to substantial calorie errors. Even with over 90% accuracy in identifying food components, some systems still make significant mistakes. For instance, one app correctly labeled a boiled egg but overestimated its calorie count by 37% due to a mismatch in the database entry.

Once the system identifies the food, the next step is figuring out how much of it is on the plate.

Portion Size and Volume Estimation

After recognizing the food, AI systems estimate the portion size. This involves calculating the area each food occupies in the image and converting that 2D area into a 3D volume or weight. To do this, systems use geometric models and assumptions about food density. Many apps rely on reference objects, like a U.S. credit card or a quarter, to determine scale. Others use techniques like monocular depth estimation or fit geometric shapes - cylinders for cups of rice, spheres for meatballs, or prisms for brownies - to approximate volume. Consumer apps often incorporate camera metadata and standard plate or utensil sizes to refine these estimates.
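The shape-fitting idea can be sketched in a few lines. This is only an illustration under stated assumptions: the pixel measurements are invented, the density is a placeholder rather than a measured value, and real apps may use quite different models. The one fixed number is the width of a standard credit card (85.6 mm), used here as the reference object.

```python
import math

CREDIT_CARD_WIDTH_CM = 8.56  # standard ID-1 card width


def pixels_to_cm(pixels, reference_pixels, reference_cm):
    """Convert a pixel length to centimetres using a reference object of known size."""
    return pixels * reference_cm / reference_pixels


def cylinder_volume_cm3(radius_cm, height_cm):
    return math.pi * radius_cm ** 2 * height_cm


def sphere_volume_cm3(radius_cm):
    return 4.0 / 3.0 * math.pi * radius_cm ** 3


def prism_volume_cm3(length_cm, width_cm, height_cm):
    return length_cm * width_cm * height_cm


def weight_grams(volume_cm3, density_g_per_cm3):
    """Volume times an assumed food density gives an estimated weight."""
    return volume_cm3 * density_g_per_cm3


# Hypothetical photo: the card spans 428 px and a meatball spans 200 px.
diameter_cm = pixels_to_cm(200, 428, CREDIT_CARD_WIDTH_CM)
volume = sphere_volume_cm3(diameter_cm / 2)
ASSUMED_DENSITY = 1.0  # g/cm^3, placeholder value for illustration only
print(round(diameter_cm, 2), "cm across")
print(round(volume, 1), "cm^3")
print(round(weight_grams(volume, ASSUMED_DENSITY), 1), "g")
```

Because the radius is cubed, a small error in the measured diameter turns into a much larger error in volume, which helps explain why portion estimation is often the weakest step.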

A review of AI dietary tools found that volume estimation errors ranged from 0.09% to 33%, with similar ranges for calorie estimates (0.10% to 38.3%). Simpler foods, like an apple or a slice of bread, tend to produce more accurate results compared to complex dishes. Apps such as What The Food use these methods to present serving sizes in familiar U.S. units like cups or ounces, while performing precise internal calculations in grams.

With the identity and quantity established, the system moves on to nutritional analysis.

Calorie Mapping and Nutritional Analysis

In the final step, the system matches the identified foods and their estimated portions to nutritional databases. Most U.S.-based tools rely on resources like USDA FoodData Central, which provides nutrient values per 100 grams or per common serving size. The AI selects the closest match - for example, linking "red apple, with skin" to its database entry - and calculates calories and macronutrients by multiplying the estimated weight or volume by the nutrient values. It then sums up the totals for all items on the plate. For example, the NYU Tandon model estimated 317 kcal for a pizza slice (10 g protein, 40 g carbohydrates, 13 g fat) and 221 kcal for idli sambar (7 g protein, 46 g carbohydrates, 1 g fat), closely aligning with reference values.
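The figures quoted for the pizza slice and the idli sambar are consistent with the standard Atwater factors (4 kcal per gram of protein and carbohydrate, 9 kcal per gram of fat). The sketch below checks that arithmetic and shows the per-100-gram scaling described above. The function names and the apple value of 52 kcal per 100 g are illustrative assumptions, not lookups from USDA FoodData Central.

```python
# General Atwater factors in kcal per gram.
KCAL_PER_G = {"protein": 4, "carbohydrate": 4, "fat": 9}


def kcal_from_macros(protein_g, carbohydrate_g, fat_g):
    """Energy implied by a macronutrient breakdown."""
    return (protein_g * KCAL_PER_G["protein"]
            + carbohydrate_g * KCAL_PER_G["carbohydrate"]
            + fat_g * KCAL_PER_G["fat"])


def scale_per_100g(value_per_100g, weight_g):
    """Scale a per-100 g database value to the estimated portion weight."""
    return value_per_100g * weight_g / 100.0


print(kcal_from_macros(10, 40, 13))  # 317, the pizza slice figure
print(kcal_from_macros(7, 46, 1))    # 221, the idli sambar figure

# Hypothetical entry: 52 kcal per 100 g, estimated portion of 150 g.
print(scale_per_100g(52, 150))       # 78.0
```

A plate total would then be the sum of the scaled values over every detected item.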

However, database mismatches pose a significant challenge. Generic entries for restaurant dishes, variations in cooking methods (like grilled versus fried), and missing regional foods can lead to inaccuracies. To address these issues, modern AI calorie counters are incorporating multimodal approaches. These combine image analysis with user input - such as confirming cooking methods or selecting specific restaurants - and historical data to improve predictions and minimize errors.

Research Findings: AI Calorie Counter Accuracy

Academic Study Results

Lab research reveals that AI calorie estimation accuracy ranges from 62% to 99%, with relative errors spanning from 0.10% to 38.3% for calorie counts and 0.09% to 33% for food volume. To put this into context, a 99% accuracy rate would mean an error of about 20 calories per day on a 2,000-calorie diet. On the other hand, 62% accuracy could lead to errors as high as 760 calories per day.
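The daily figures above follow from treating accuracy as one minus the relative error, which is a simplifying assumption. A short sketch of that arithmetic, with a hypothetical function name:

```python
def daily_error_kcal(accuracy_pct, daily_intake_kcal=2000):
    """Calories unaccounted for if (100 - accuracy)% of intake is misestimated."""
    return (100 - accuracy_pct) * daily_intake_kcal / 100


print(daily_error_kcal(99))  # 20.0
print(daily_error_kcal(62))  # 760.0
```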

In controlled conditions, the NYU Tandon system showed strong results, achieving a mean average precision (mAP) of 0.7941 at an intersection over union (IoU) threshold of 0.5, which loosely corresponds to identifying food items correctly about 80% of the time. The system's calorie estimates aligned closely with reference values across various food categories. Notably, simpler foods like apples had lower error rates than more complex, mixed dishes.

Consumer App Testing Results

Testing consumer apps in real-world scenarios highlighted more variability. For instance, one study found that MyFitnessPal (version 24.10.0) achieved a food recognition accuracy of 97%, correctly identifying 38 out of 39 items. Fastic followed at 92% (36 out of 39), and HealthifyMe scored 90% (35 out of 39). However, accurate food identification didn’t always equate to precise calorie calculations. On average, apps overestimated energy for Western diets by 1,040 kJ and underestimated energy for Asian diets by 1,520 kJ. Individual food items saw discrepancies ranging from 5% to 37% in overestimation and 12% to 16% in underestimation. Additionally, a new AI tool developed by NRC Canada improved calorie prediction accuracy by 25.5% compared to earlier models.

User Study Data

Real-world user studies shed light on how these accuracy levels affect daily use. In supervised tests with dietetics students, apps with AI features consistently overestimated energy intake for Western diets by 1,040 kJ, underestimated Asian diets by 1,520 kJ, and underreported calories for balanced diets by 944 kJ. While AI-powered image recognition reliably identified individual food components, it struggled to determine calorie counts for mixed dishes from different cuisines. These findings suggest that AI calorie counters are more effective when users focus on multi-day or weekly averages rather than relying on the accuracy of single-meal estimates.

Factors That Affect AI Calorie Accuracy

Technical Limitations

AI calorie estimation faces challenges due to limited and imbalanced datasets. Many models are trained using data that primarily focuses on Western, single-item foods - like an apple or a slice of pizza - while underrepresenting mixed dishes, home-cooked meals, and non-Western cuisines. This bias results in notable inaccuracies, such as an average overestimate of 249 calories for Western diets and an underestimate of 363 calories for Asian diets.
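These calorie figures match the kilojoule values reported in the app testing (1,040 kJ and 1,520 kJ) once converted at 4.184 kJ per kilocalorie. A quick check, with a hypothetical helper name:

```python
KJ_PER_KCAL = 4.184  # thermochemical calorie definition


def kj_to_kcal(kj):
    """Convert kilojoules to kilocalories (food 'calories')."""
    return kj / KJ_PER_KCAL


print(round(kj_to_kcal(1040)))  # 249, Western diets (overestimate)
print(round(kj_to_kcal(1520)))  # 363, Asian diets (underestimate)
print(round(kj_to_kcal(944)))   # 226, balanced diets (underestimate)
```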

Visual similarities between foods add another layer of difficulty. For example, mashed potatoes and cauliflower mash or regular cheese and its low-fat version can look nearly identical in photos, even though their calorie counts differ significantly. This can lead to wide variations in calorie estimates for foods that appear similar.

Another issue lies in mismatches within nutrition databases. Even when an AI correctly identifies a food item, such as grilled chicken, discrepancies in database entries - like whether the chicken was cooked with or without oil, or differences between restaurant and home-cooked portions - can result in inaccurate calorie estimates. These technical hurdles highlight how environmental factors and user practices further influence accuracy.

Environmental and User Factors

The quality of photos plays a major role in AI performance. Poor lighting, cluttered backgrounds, or angled shots can make it harder for the AI to identify food boundaries and estimate portion sizes. Cropped images or overlapping foods can also obscure reference points needed for accurate volume calculations.

User behavior is just as critical. Clear, well-lit photos taken from a centered angle can keep errors for simpler foods within a 10–20% range. On the other hand, mistakes like logging "fried chicken" instead of "grilled chicken" can lead to cumulative inaccuracies over time. Apps like What The Food address these issues by combining automatic detection with manual overrides, allowing users to confirm or adjust entries before saving their meals. These external factors underline the importance of refining AI systems to handle real-world complexities.

Recent Improvements in AI Technology

Recent advancements have tackled many of these challenges, making calorie estimation more precise. Multi-angle imaging, which involves taking multiple photos from different viewpoints, helps AI create a more accurate 3D representation of foods. This method is especially useful for irregular items like salads or stews. Similarly, depth-sensing technologies - such as smartphone LiDAR or dual cameras - offer explicit distance measurements, reducing reliance on visual approximations.

In 2024, a research tool that combined enhanced computer vision with improved nutrition prediction achieved a 25.5% increase in accuracy for estimating calories, mass, and macronutrients from images. Additionally, multimodal models that integrate image analysis with text descriptions (e.g., "grilled, no oil") capture preparation details that photos alone might miss, leading to even more refined calorie estimates.

When AI Calorie Counters Work Best and Where They Struggle

High-Accuracy Scenarios

AI calorie counters excel when dealing with single-ingredient foods like a plain apple or a chicken breast. These foods are straightforward, with clear portion sizes and ingredients, making it easier for the AI to provide reliable calorie estimates. Similarly, standardized meals from restaurants - think a McDonald's burger or a Chipotle bowl - are another area where AI performs well. Their consistent serving sizes and well-documented nutritional data ensure accurate results.

The quality of the photo plays a big role, too. Bright, well-lit, overhead images make it easier for the AI to identify food boundaries and estimate portions. Under ideal conditions, tools like What The Food’s AI analyzer can achieve an accuracy rate of about 98% for common foods.

Foods with distinct shapes and textures also help the AI perform better, as they align closely with the training data. Including reference objects, like utensils or the plate itself, further improves the AI’s ability to estimate portion sizes accurately.

Low-Accuracy Scenarios

AI calorie counters face challenges when analyzing mixed dishes or homemade meals where ingredients aren’t immediately visible. Complex dishes - such as casseroles, layered salads, or stir-fries - often lead to inaccurate estimates. Hidden components like oils or sauces, which are calorie-dense but hard to detect, add to the difficulty.

Poor photo quality is another issue. Side angles, shadows, or cluttered backgrounds can obscure the food, making it harder for the AI to analyze. User errors - like including fingers, napkins, or cropping out parts of the meal - also reduce accuracy. Sauces, gravies, and other intricate additions further complicate the process since they’re not always visible but can significantly impact calorie counts.

These limitations directly influence the accuracy of calorie estimates, especially for more complex meals.

Error Ranges and Their Impact

The variability in accuracy leads to a range of potential errors in calorie tracking. For simple foods with clear photos, AI calorie counters typically have an error range of 10–20%. However, for complex or mixed dishes, errors can jump to 30–40% or more. On average, across all meal types, the error rate tends to fall between 20% and 25%.

To put this into perspective, on a 2,000-calorie daily diet, a 10–30% error equates to a discrepancy of 200–600 calories, and a 40% error to about 800. Over time, consistently underestimating intake could lead to an unintended weight gain of about 15 pounds in a year. For someone trying to lose weight, the same underestimates could slow progress by around 0.5–1 pound per week.
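The arithmetic behind numbers like these is easy to reproduce. The sketch below computes the calorie band for a given error range and converts a daily discrepancy into pounds per week. It relies on the common rule of thumb of roughly 3,500 kcal per pound of body weight, which is an assumption added here and only a rough approximation.

```python
KCAL_PER_POUND = 3500  # rule-of-thumb assumption, not from the studies cited


def error_band_kcal(daily_intake_kcal, low_pct, high_pct):
    """Daily calorie discrepancy at the low and high end of an error range."""
    return (daily_intake_kcal * low_pct / 100,
            daily_intake_kcal * high_pct / 100)


def pounds_per_week(daily_error_kcal):
    """Weekly weight change implied by a consistent daily calorie discrepancy."""
    return daily_error_kcal * 7 / KCAL_PER_POUND


print(error_band_kcal(2000, 10, 40))  # (200.0, 800.0)
print(pounds_per_week(250))           # 0.5
print(pounds_per_week(500))           # 1.0
```

Under this rule of thumb, a steady 250–500 calorie daily discrepancy corresponds to the 0.5–1 pound per week range.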

Despite these challenges, AI calorie counters still outperform manual logging, which typically achieves only 60–70% accuracy. For long-term success, users should double-check estimates for complex meals to keep their tracking as accurate as possible.

Conclusion

AI-powered calorie counters make tracking your nutrition easier by cutting out the need for tedious manual entries. They shine when it comes to straightforward meals, offering a level of consistency that's crucial for sticking to long-term health goals. For instance, tools like What The Food can transform a simple photo of your meal into detailed nutritional data in just seconds. Their speed and convenience are undeniable, but they do have their limits.

No calorie-tracking app is flawless, particularly when dealing with complex meals that include hidden ingredients or a mix of components. Even traditional food labels can be off by up to 20%, so expecting pinpoint accuracy isn’t realistic. Instead, think of AI-generated estimates as a helpful guide within a reasonable margin of error.

FAQs

How can I make AI calorie counters more accurate for complex meals?

To make AI calorie counters more accurate for complex meals, start by snapping clear, well-lit photos of your food. Make sure the image shows all the ingredients and portions clearly. If your meal includes several components, try uploading multiple images from different angles to give the AI a more complete view.

These tools often rely on plate size and food volume to estimate portions, so close-up images with clear details can make a big difference. For added accuracy, you can manually review or adjust the ingredient details after the AI's initial analysis. Combining AI with your input can lead to much better results, especially for intricate dishes.

What affects the accuracy of AI calorie counters?

The precision of AI calorie counters depends on a variety of factors. One major influence is image quality - photos that are blurry or poorly lit can make it tough for the AI to correctly identify the food in question. Another challenge comes from complex dishes or mixed meals, where separating and analyzing individual ingredients becomes tricky. On top of that, accurately estimating portion sizes is a frequent hurdle, especially when serving sizes or food presentation vary widely.

Other elements, such as unusual lighting or unconventional food arrangements, can also affect accuracy. While these tools continue to advance, such variables can still lead to occasional miscalculations in calorie estimation.

How reliable are AI-powered calorie counters compared to manual tracking?

AI-powered calorie counters, such as What The Food, are impressively accurate, boasting up to 98% accuracy for many everyday foods. This level of precision often surpasses manual tracking, which can be prone to mistakes, guesswork, or even underreporting.

That said, their accuracy can vary depending on factors like how complex the dish is and the quality of the food photo you upload. To get the best results, make sure your images are well-lit and clearly show the meal you're analyzing.