Calorie Estimation Algorithms: How They Work

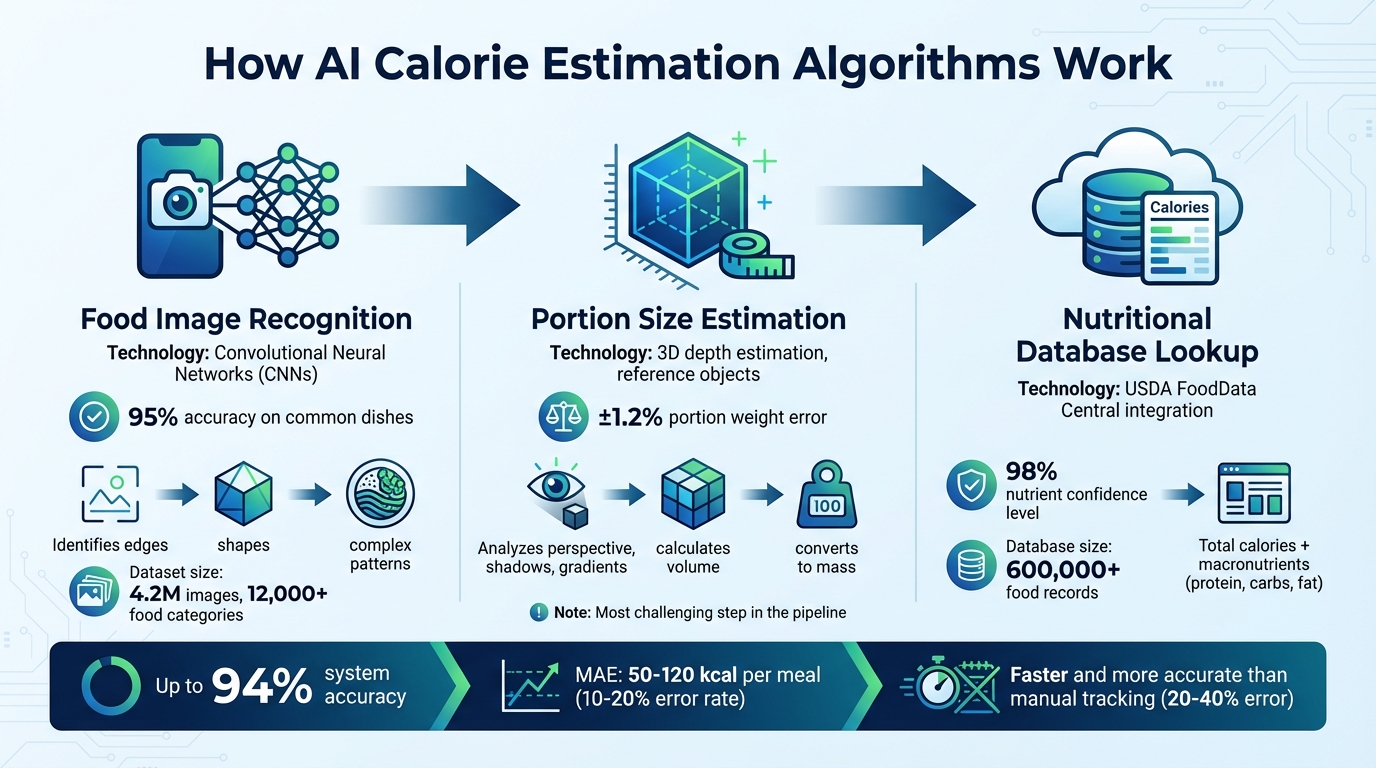

Calorie estimation algorithms analyze food photos to estimate nutritional content using machine learning. These systems identify food items, estimate portion sizes (in some cases with 3D analysis), and match the results to trusted nutritional databases such as USDA FoodData Central. Compared with manual tracking, they are faster and can reduce logging errors.

Key Points:

- Steps: Food recognition, portion estimation, database mapping.

- Accuracy: Up to 94%, with portion weight errors as low as ±1.2%.

- Technology: Convolutional Neural Networks (CNNs), 3D depth estimation.

- Challenges: Hidden ingredients, portion size errors, and mixed dishes.

- Applications: Apps like "What The Food" provide detailed calorie and nutrient data in seconds, improving user experience and consistency.

These algorithms make tracking food intake easier, offering a faster and more precise alternative to manual methods.

How AI Calorie Estimation Algorithms Work: 3-Step Process

Core Components of Calorie Estimation Algorithms

Food Image Recognition with Neural Networks

Convolutional Neural Networks (CNNs) play a central role in food recognition by analyzing visual features layer by layer. Early layers focus on basic patterns like edges and textures, middle layers identify shapes, and deeper layers detect complex patterns such as "pasta" or "grilled chicken." This layered approach helps the system differentiate between visually similar foods - for example, telling white rice from mashed potatoes by texture.

These systems rely on object detection to identify multiple items on a single plate. For instance, they can separate salmon from a side salad by localizing each item with a bounding box, assigning each box a label and a confidence score, and measuring its dimensions. The system then pulls nutritional data for each labeled item from a database. To improve accuracy, these models are often fine-tuned on food-specific image datasets that are augmented with variations such as scaling and rotation.
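The detection output described above can be pictured with a small sketch. It assumes a detector has already returned labels, confidence scores, and pixel bounding boxes; the `Detection` class, the 0.5 threshold, and the sample values are hypothetical and are not taken from any particular app.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Detection:
    """One detected food item (hypothetical structure for illustration)."""
    label: str
    confidence: float                 # 0.0 to 1.0
    box: Tuple[int, int, int, int]    # (x_min, y_min, x_max, y_max) in pixels

    @property
    def size_px(self) -> Tuple[int, int]:
        """Width and height of the bounding box in pixels."""
        x_min, y_min, x_max, y_max = self.box
        return (x_max - x_min, y_max - y_min)


def keep_confident(detections: List[Detection], threshold: float = 0.5) -> List[Detection]:
    """Drop detections whose confidence is below the (assumed) threshold."""
    return [d for d in detections if d.confidence >= threshold]


# Made-up detector output for a single plate.
plate = [
    Detection("salmon", 0.91, (120, 80, 420, 260)),
    Detection("side salad", 0.84, (450, 90, 700, 300)),
    Detection("lemon wedge", 0.32, (400, 240, 450, 280)),
]

for d in keep_confident(plate):
    print(d.label, d.confidence, d.size_px)
```

Only the detections that pass the threshold would move on to portion estimation and database lookup.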

"The overall system accuracy is bounded by the weakest stage - which is why apps using a high-quality nutritional database but a poor classification model still produce inaccurate results." - AI Food Tracker

Accurate food image recognition, in turn, depends on training with large-scale datasets, which is what carries performance over to real-world use.

Training on Large-Scale Food Datasets

Once image recognition is in place, algorithms improve further through training on extensive food datasets. The size and diversity of these datasets directly influence how well the model performs. For example, PlateLens uses a dataset of 4.2 million images covering over 12,000 food categories. In contrast, academic datasets like Food-101 and VIREO Food-172 are much smaller, with 101,000 and 110,000 images respectively. This scale difference often gives commercial systems an edge in real-world performance.

Human annotators play a critical role in preparing these datasets by labeling food types and drawing bounding boxes around individual items. To ensure global applicability, datasets include a wide range of cuisines - like sushi, tacos, and tikka masala. Models are also updated periodically with user feedback and new food trends, keeping them accurate over time. Today’s top systems can achieve 95% accuracy on commonly recognized dishes.

Portion Size Estimation Techniques

Portion size estimation remains one of the toughest challenges. Basic methods use lookup tables with average USDA weights for each food type, but this approach often leads to errors when actual portions differ from the averages.

More advanced techniques incorporate reference objects like plates or utensils to determine real-world scale, helping calculate the dimensions of food items. Cutting-edge systems take it further by using 3D depth estimation. By analyzing monocular cues such as perspective, shadows, and gradients, these systems can estimate the height and volume of food. Once volume is determined, it’s converted to mass using density data from USDA FoodData Central. These methods significantly reduce errors compared to traditional approaches.
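As a rough illustration of the reference-object and volume-to-mass steps, the sketch below scales pixels to centimeters using a plate of known diameter, approximates the food as a flat-topped block, and converts volume to mass with a density value. The function names, the 27 cm plate, and the density of 0.8 g/cm³ are all assumptions chosen for the example, not values from USDA FoodData Central.

```python
def cm_per_pixel(reference_cm: float, reference_px: float) -> float:
    """Real-world scale derived from a reference object of known size."""
    return reference_cm / reference_px


def estimate_mass_g(area_px: float, height_cm: float,
                    scale_cm_per_px: float, density_g_per_cm3: float) -> float:
    """Estimate mass from a pixel area, an estimated height, and a density.

    Treats the food as a prism (area x height), which is a deliberate
    simplification; real systems use richer 3D reconstructions.
    """
    area_cm2 = area_px * scale_cm_per_px ** 2
    volume_cm3 = area_cm2 * height_cm
    return volume_cm3 * density_g_per_cm3


# Hypothetical numbers: a 27 cm plate spans 900 px in the photo.
scale = cm_per_pixel(reference_cm=27.0, reference_px=900.0)

# A food region covering 40,000 px, estimated to be 2.5 cm tall.
mass = estimate_mass_g(area_px=40_000, height_cm=2.5,
                       scale_cm_per_px=scale, density_g_per_cm3=0.8)
print(f"{mass:.1f} g")
```

Because area scales with the square of the pixel scale, a small error in the reference measurement is amplified in the final mass, which is one reason portion estimation is so error-prone.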

"Portion estimation is the hardest part of the pipeline and where most of the ongoing research focuses." - CalorieCue Team

Accurate portion size estimation is critical for generating reliable nutritional data, making it a key area of research and development.


Integration with Nutritional Databases

Mapping Food Items to Nutritional Data

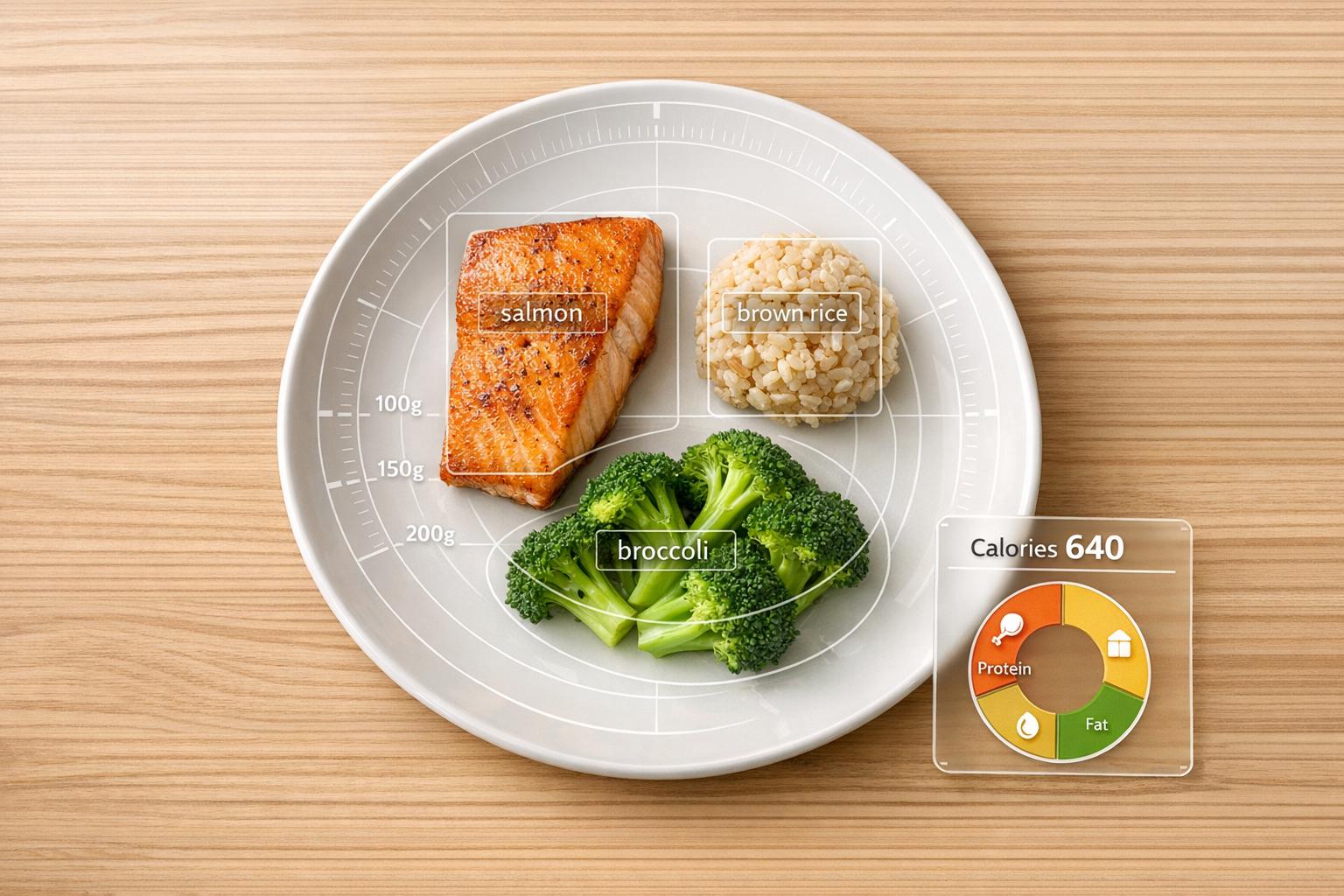

After identifying a food item and estimating its portion size, the algorithm links this visual information to nutritional values through a detailed multi-step process that ends with a precise database lookup.

It uses taxonomy mapping to refine broad categories (like "protein") into specific items (such as "pan-seared salmon"). Contextual inference also plays a role, identifying ingredient combinations - like tortillas, beans, and salsa - to deduce meals such as a "burrito bowl".
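Contextual inference can be sketched as a simple rule lookup, assuming the recognizer has already produced a set of ingredient labels. The `MEAL_RULES` table and `infer_meal` function are hypothetical; production systems likely learn these associations rather than hard-coding them.

```python
from typing import Optional, Set

# Hypothetical rules: a meal name and the ingredients that must all be present.
MEAL_RULES = {
    "burrito bowl": {"tortilla", "beans", "salsa"},
    "salmon with salad": {"salmon", "salad greens"},
}


def infer_meal(detected: Set[str]) -> Optional[str]:
    """Return the most specific meal whose required ingredients were all detected."""
    matches = [
        (len(required), name)
        for name, required in MEAL_RULES.items()
        if required <= detected  # subset test: every required item was seen
    ]
    if not matches:
        return None
    # Prefer the rule with the most required ingredients.
    return max(matches)[1]


print(infer_meal({"tortilla", "beans", "salsa", "rice"}))
print(infer_meal({"beans"}))
```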

The system relies on trusted databases like USDA FoodData Central, which houses over 600,000 food records with lab-verified nutrient data. AI-enhanced databases can exceed 500,000 entries and include details for variations like cooking methods (e.g., grilling versus frying) and regional recipe differences. According to What The Food, its approach achieves a 98% nutrient confidence level when validated against these databases.

This precise mapping provides the foundation for accurate calorie and macronutrient calculations.

Calculating Total Calories and Macronutrients

Once food items are matched to nutritional data, the algorithm calculates total calories and macronutrients by converting portion sizes into measurable values.

It starts by estimating the food's volume (in cm³) and converts this to weight using density values specific to each type of food. Calories are then calculated by multiplying the weight (in grams) by the nutrient value per gram, following the Atwater method, which assigns roughly 4 kcal per gram to protein and carbohydrates, 9 kcal per gram to fat, and 7 kcal per gram to alcohol.

For meals with multiple components, the system calculates nutritional values for each item individually. Modern APIs provide detailed JSON data, such as "nutrition_per_100g" and "selected_portion_nutrition", to clarify each step of the conversion process. These individual values are then summed to deliver a complete nutritional breakdown.
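The per-item calculation and final sum can be sketched as follows. The key `nutrition_per_100g` comes from the text above; the `name` and `portion_g` fields, the function names, and the nutrient values are assumptions made for illustration and are not real database figures. Calories are derived with the general Atwater factors (4, 4, 9, and 7 kcal per gram).

```python
from typing import Dict, List

# General Atwater factors in kcal per gram.
ATWATER_KCAL_PER_G = {"protein": 4, "carbs": 4, "fat": 9, "alcohol": 7}


def atwater_kcal(macros_g: Dict[str, float]) -> float:
    """Convert grams of each macronutrient to kilocalories."""
    return sum(ATWATER_KCAL_PER_G[name] * grams for name, grams in macros_g.items())


def portion_nutrition(nutrition_per_100g: Dict[str, float], portion_g: float) -> Dict[str, float]:
    """Scale per-100 g values to the estimated portion weight."""
    factor = portion_g / 100
    return {name: value * factor for name, value in nutrition_per_100g.items()}


def meal_totals(items: List[dict]) -> Dict[str, float]:
    """Sum macronutrients across all items, then add total kcal."""
    totals: Dict[str, float] = {}
    for item in items:
        selected = portion_nutrition(item["nutrition_per_100g"], item["portion_g"])
        for name, grams in selected.items():
            totals[name] = totals.get(name, 0.0) + grams
    totals["kcal"] = atwater_kcal(totals)  # computed before "kcal" is stored
    return totals


# Illustrative values only.
meal = [
    {"name": "salmon", "portion_g": 150,
     "nutrition_per_100g": {"protein": 20, "carbs": 0, "fat": 10}},
    {"name": "rice", "portion_g": 200,
     "nutrition_per_100g": {"protein": 2, "carbs": 28, "fat": 0}},
]

print(meal_totals(meal))
```

Keeping the per-100 g values separate from the scaled portion values makes it easy to recompute the totals when a user corrects a portion size.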

Users are encouraged to review the results and manually add any hidden ingredients, such as oils, butter, or dressings, that the system might miss. This "human-in-the-loop" approach not only helps correct potential errors - like confusing white rice with brown rice - but also improves the algorithm through user feedback.

Evaluating the Accuracy of Calorie Estimation Algorithms

Accuracy Metrics: MAE, MAPE, and More

To gauge how well calorie estimation algorithms perform in practical settings, researchers rely on several key metrics.

Mean Absolute Error (MAE) measures the average difference - expressed in kilocalories - between the algorithm's predictions and the actual calorie values. Cutting-edge models currently achieve an MAE ranging from 50 to 120 kcal per meal, which corresponds to a 10–20% error rate. Mean Absolute Percentage Error (MAPE), on the other hand, calculates the average percentage difference between the predicted and actual values. This metric is particularly useful for comparing performance across meals of varying sizes.

Another important metric is the Within-X% Rate, which evaluates how often an algorithm's prediction falls within a specific margin - like 10% or 20% - of the true calorie value. For instance, as of March 2026, the AI-powered tracker Nutrola achieved a MAPE of 8.4% and a "Within-10% Rate" of 72% during independent testing. For comparison, manual calorie tracking typically yields a MAPE between 20% and 40%.
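To make MAE, MAPE, and the Within-X% Rate concrete, here is a minimal sketch that computes all three over a handful of meals. The function names and the calorie values are made up for the example and are not results from any of the apps mentioned.

```python
from typing import Sequence


def mae(predicted: Sequence[float], actual: Sequence[float]) -> float:
    """Mean Absolute Error in the same unit as the inputs (kcal here)."""
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(actual)


def mape(predicted: Sequence[float], actual: Sequence[float]) -> float:
    """Mean Absolute Percentage Error; actual values must be nonzero."""
    return 100 * sum(abs(p - a) / a for p, a in zip(predicted, actual)) / len(actual)


def within_rate(predicted: Sequence[float], actual: Sequence[float],
                tolerance_pct: float) -> float:
    """Percentage of predictions within tolerance_pct of the true value."""
    hits = sum(
        1 for p, a in zip(predicted, actual)
        if abs(p - a) / a * 100 <= tolerance_pct
    )
    return 100 * hits / len(actual)


# Made-up kcal values for four meals.
actual_kcal = [500, 800, 300, 600]
predicted_kcal = [460, 920, 315, 600]

print(mae(predicted_kcal, actual_kcal))              # average error in kcal
print(mape(predicted_kcal, actual_kcal))             # average error in percent
print(within_rate(predicted_kcal, actual_kcal, 10))  # share within 10%
```

In this example one meal is off by 15%, so it counts against the Within-10% Rate even though the MAPE across all four meals stays in single digits. That is why the two metrics are usually reported together.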

Other metrics include Top-1 and Top-5 Accuracy, which assess how often the correct food category appears as the first or among the top five predictions. Additionally, Portion Estimation Error measures discrepancies in grams or as a percentage when estimating food volume or mass. While these metrics provide a snapshot of algorithm performance, challenges in real-world applications introduce complexities that impact these numbers.
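Top-1 and Top-5 accuracy follow the same pattern: check whether the true label appears among the first k ranked predictions. The sketch below uses a hypothetical `top_k_accuracy` helper and invented rankings for three images.

```python
from typing import List, Sequence


def top_k_accuracy(ranked_predictions: Sequence[List[str]],
                   true_labels: Sequence[str], k: int) -> float:
    """Fraction of samples whose true label is among the top k predictions."""
    hits = sum(
        1 for ranked, truth in zip(ranked_predictions, true_labels)
        if truth in ranked[:k]
    )
    return hits / len(true_labels)


# Invented ranked outputs (most likely first) for three photos.
ranked = [
    ["white rice", "mashed potatoes", "cauliflower mash"],
    ["mashed potatoes", "cauliflower mash", "white rice"],
    ["pasta", "noodles", "white rice"],
]
truth = ["white rice", "cauliflower mash", "grilled chicken"]

print(top_k_accuracy(ranked, truth, k=1))
print(top_k_accuracy(ranked, truth, k=3))
```

The second photo is a miss at k=1 but a hit at k=3, which is the typical gap between Top-1 and Top-5 figures for visually similar foods.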

Accuracy Challenges and Recent Improvements

Despite advancements, real-world challenges continue to limit the accuracy of calorie estimation algorithms. One major hurdle is occlusion, where hidden ingredients - like those in casseroles, stews, or burritos - cannot be identified from surface-level images. Fat detection remains another weak spot for these systems, with error rates often higher than those for proteins or carbohydrates. This is largely because fats, such as oils or butter, are difficult to visually detect in photos.

Portion estimation is widely regarded as the most difficult step in the process. Traditional 2D methods struggle to account for food height and density, leading to inaccuracies. For instance, apps relying on basic lookup tables for portion sizes often produce a MAPE of 18% to 34%. Visual ambiguity adds further complexity, as foods with similar appearances - like mashed potatoes and cauliflower mash - can easily be misclassified.

To illustrate these challenges, here’s a breakdown of MAPE ranges and associated difficulties across various cuisines:

| Cuisine Category | Average MAPE | Primary Challenge |
|---|---|---|
| Simple Items | 4.8% - 10.3% | Easier for AI; single-item identification |
| American/Western | 6.1% - 12.4% | Well-represented in training data |
| East Asian | 9.2% - 22.5% | Complexity of mixed ingredients |
| South Asian | 10.1% - 25.3% | Hidden fats (e.g., ghee, oils) in sauces |
| Complex Meals | 11.3% - 27.1% | Multiple components and portion size overlap |

Recent technological advancements are beginning to address these issues. 3D sensing tools, for example, are replacing traditional 2D image analysis. By leveraging monocular depth cues, LiDAR, and structured light, these systems can more accurately estimate food volume and portion sizes. Additionally, multimodal learning integrates image data with natural language processing, using meal descriptions and contextual factors like time of day or location to refine calorie predictions. Another improvement involves switching to professionally verified databases, such as USDA FoodData Central, which helps reduce errors associated with community-sourced data. These innovations are paving the way for more precise calorie estimation technologies in the future.

What The Food: A Practical Application of Calorie Estimation

Key Features and Benefits

What The Food demonstrates how calorie estimation algorithms can work in real life by using a multi-stage AI pipeline. This system identifies food items, estimates portions, and calculates nutritional data - all from a single photo. The app uses advanced food detection and nutrient mapping technology to provide accurate insights. With the ability to recognize over 10,000 food items from various cuisines, it delivers a detailed macro breakdown within seconds. Users can view both macronutrients (protein, carbs, fat) and micronutrients, with a 98% confidence level in nutrient accuracy, thanks to verified food databases.

One standout feature is its recipe analyzer, which identifies ingredients from photos and generates step-by-step cooking instructions, complete with nutritional facts per serving. Additionally, its keto meal planner offers personalized weekly meal plans, tailored to individual goals, dietary restrictions, and allergies.

How It Works: Step-by-Step User Experience

The app’s technology translates into an intuitive and straightforward experience. Users simply upload or take a photo of their meal, and the AI quickly identifies the food, estimates portion sizes, and provides a full nutritional breakdown - all in just seconds. Each analyzed meal is saved in a scan history, allowing users to monitor their eating habits over time and develop healthier dietary patterns.

Gemma Ray, RDN and Sports Nutrition Partner, highlights its value, saying:

"The accuracy and clarity help our athletes understand what fuels their performance. It's like having a dietitian assistant available 24/7."

Free Access and User-Friendly Design

In addition to its advanced features, What The Food is designed to be accessible and easy to use. It offers a free tier that includes automatic food logging, meal history tracking, and a recipe saver. Users can try the app for free before opting for the premium plan, which costs $9.99 per month.

So far, the app has analyzed over 150,000 meals, is used in more than 180 countries, and holds a user rating of 4.95 out of 5. Irina D'Amore shares her thoughts:

"Instant AI offers impressive food detection and nutrition analysis from photos - fast, accurate, and incredibly convenient for mindful eating on the go."

Conclusion: The Future of Calorie Estimation Technology

Key Takeaways

Calorie estimation technology has come a long way, blending neural networks, extensive food datasets, and nutritional databases to analyze food photos and deliver detailed macro breakdowns. These AI systems have surpassed old manual methods, offering nutritional data within seconds from just a photo. This makes health tracking faster and more convenient than ever.

The process typically involves three steps: identifying food items through image recognition, estimating portion sizes based on visual cues, and matching the data to trusted sources like the USDA FoodData Central. While current models still face challenges with hidden ingredients (like oils, butter, and sauces) and complex mixed dishes, they’ve made nutrition tracking more accessible to the average person.

With these advancements as a foundation, the next wave of calorie estimation technology is set to push accuracy and personalization even further.

What's Next for Calorie Estimation

The future of calorie tracking is all about precision and personalization. New technologies like depth-sensing and 3D volume calculations are expected to address portion estimation issues, achieving error rates as low as ±1.2%. These innovations could become commercially available within the next 2–4 years.

Personalization will also take center stage. Future systems will incorporate factors like metabolic responses, health conditions, and even genetic data to deliver tailored dietary advice. By integrating AI with wearable devices, users can expect real-time dietary feedback that combines nutrition insights with data on sleep, activity, and other health metrics. Features like augmented reality overlays and instant coaching could soon help users make healthier meal choices on the spot.

Machine learning will continue to enhance these systems by analyzing long-term eating habits, predicting potential health risks, and suggesting preventive dietary changes. Additionally, on-device processing will improve privacy and speed, allowing complex analyses to happen directly on users' devices without relying on internet connections.

FAQs

Why is portion size the hardest part to estimate from a photo?

Estimating portion sizes from a photo is tricky because it involves figuring out both the volume and weight of the food. Challenges arise when items overlap, dishes are visually complex, or key details aren't clear in the image. On top of that, photos often lack depth and scale - two critical elements needed for precise portion measurements. These limitations make it tough for AI to get it just right.

How can I improve calorie accuracy when foods have hidden oils or sauces?

To get more precise calorie estimates, focus on taking clear, close-up photos of your food from several angles. This can help the AI catch hidden ingredients like oils or sauces that might otherwise go unnoticed. If the app allows, include detailed descriptions or notes about the ingredients when you upload your images.

For even better accuracy, manually tweak the calorie estimates if the app provides that option. Keep in mind that AI systems work based on what they can see and the data they have, so taking time to review and adjust regularly can make a big difference in the long run.

What does “94% accurate” mean for a single meal in calories?

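The phrase "94% accurate" is usually read to mean that the AI's calorie estimate typically falls within about 6% of the meal's actual calorie count. For a 500 kcal meal, that is a range of roughly 470 to 530 kcal. It describes typical performance, so an individual meal, especially one with hidden oils or sauces, can fall outside that range.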